Pro: AVCHD Quantization process

-

@cbrandin

Thanks for ideas, Chris.

Did you try 1080i and 1080p at high bitrates and look at quantization values?

I don't know how they could be the same, as the I-frame size also increases. -

Chris, one additional thing.

We clearly need to make StreamParser able to show how the bitrate is distributed within the AVCHD stream. -

The problem is that StreamParser hasn't got any video stream analysis parts in it (it's all transport stream based) - and writing all that is a very big deal. StreamEye does have that capability, and for $50. I wrote StreamParser to do what other programs couldn't, I really don't want to replicate Elecard's efforts, especially since they have dropped the price to $50. We are talking hundreds of hours of work here - I'd rather do other things.

Chris -

I have been testing lowering the minimum quantization value. This is not incorporated into PTool yet; Vitaliy made a special patch for me to test.

I tested three scenarios (all in the 24H mode): Panasonic factory settings, Vitaliy's suggested 42000000 setting for 24H, and finally Vitaliy's 42000000 setting plus the minimum quantization value lowered from the default value of 20 down to 10. The test chart was an image containing gray, red, green, blue, cyan, magenta, and yellow. Each stripe was a gradient going from full saturation to white.

Chart:

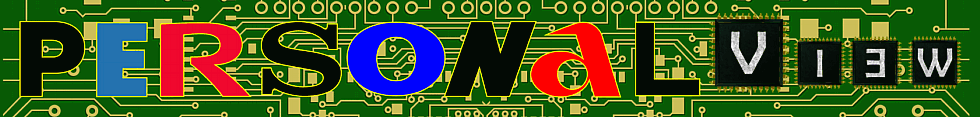

Vector Scope of factory 24H settings:

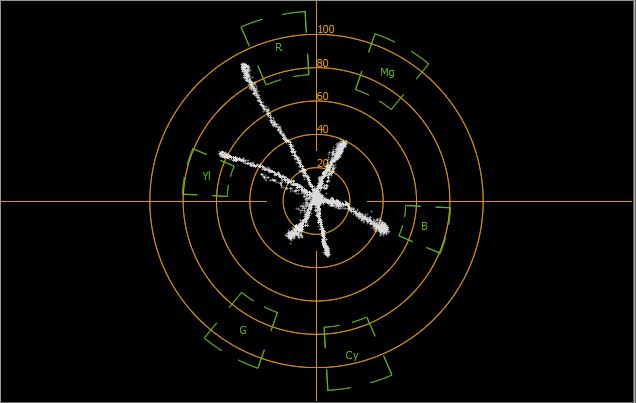

Vector Scope of PTool suggested 42000000 for 24H settings:

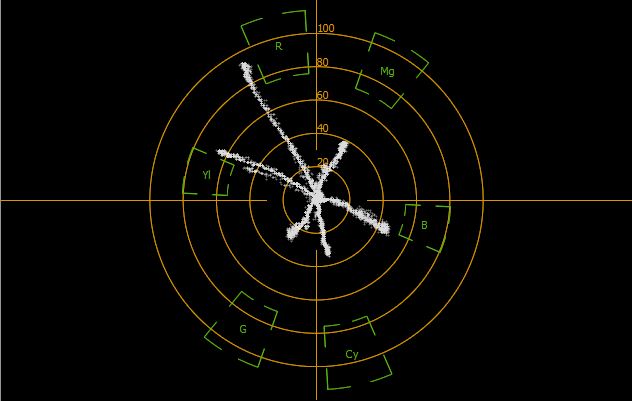

Vector Scope of PTool suggested 42000000 plus quantization minimum set to 10 for 24H settings:

It appears that a lower quantization value does improve color fidelity (which means less blocking). Notice that the yellow axis shows a significant color artifact in all but the quantization=10 captures.

These are just the static tests, I plan to do some dynamic testing later.

[Update] The quantization minimums don't seem to be changing as much as I expected. Maybe they won't with static scenes. So, at this point, I'm still analyzing exactly why the color fidelity is improved with this patch.

Chris

Gradation Test Chart.jpg (600 x 424, 33K)

Factory-20QMin-24H.jpg (636 x 403, 32K)

42M-20QMin-24H.jpg (632 x 401, 29K)

42M-10QMin-24H.jpg (636 x 402, 30K)

-

Great work, Chris. I'm glad someone is approaching this GH2 testing methodically.

Forgive a newbie question, and don't let me distract you from your work, but what exactly is being quantized in these tests?

A quick survey of the h.264 literature via Google suggests to me that it is the pixel data in the frequency domain. (This may not be the correct terminology; I'm trying to translate from my understanding of FFT in audio DSP work.)

[EDIT] - I suppose you're varying this parameter (described in http://www.pixeltools.com/rate_control_paper.html):

"In particular, the quantization parameter QP regulates how much spatial detail is saved. When QP is very small, almost all that detail is retained. As QP is increased, some of that detail is aggregated so that the bit rate drops – but at the price of some increase in distortion and some loss of quality."
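To make the effect of QP concrete, here is a minimal sketch of how the nominal H.264 quantizer step size grows with QP (it doubles every 6 QP steps). It assumes the "quantization value" discussed in this thread maps directly to the H.264 QP, which nobody here has confirmed for the GH2. The `quantize` and `reconstruct` helpers are a simplified floating-point illustration, not the integer arithmetic a real encoder uses.

```python
# Nominal H.264 quantizer step sizes for QP 0..5.
# The step size doubles for every increase of 6 in QP.
BASE_QSTEP = [0.625, 0.6875, 0.8125, 0.875, 1.0, 1.125]


def qstep(qp: int) -> float:
    """Return the nominal quantizer step size for an 8-bit H.264 QP."""
    if not 0 <= qp <= 51:
        raise ValueError("QP must be in the range 0..51")
    return BASE_QSTEP[qp % 6] * 2 ** (qp // 6)


def quantize(coeff: float, qp: int) -> int:
    """Simplified uniform quantizer: nearest multiple of the step size."""
    return round(coeff / qstep(qp))


def reconstruct(level: int, qp: int) -> float:
    """Decoder-side value recovered from a quantized level."""
    return level * qstep(qp)


if __name__ == "__main__":
    coeff = 40.0  # arbitrary example transform coefficient
    for qp in (10, 20):  # the two minimum values compared in this thread
        level = quantize(coeff, qp)
        print(f"QP={qp} step={qstep(qp)} level={level} "
              f"reconstructed={reconstruct(level, qp)}")
```

Under that assumption, lowering the minimum from 20 to 10 shrinks the smallest available step from 6.5 to 2.0, so fine gradients can be represented with less rounding error.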

Anyway good luck with your interesting tests!

-bruno

-

I think I figured out why the color fidelity with higher bitrates is better, even though the minimum quantization value is not lower. It's because, even though the minimum is the same, a higher proportion of the macroblocks in I-frames are coded at that minimum value. It looks like the codec is smart enough to know that yellow is the color eyes are least able to resolve, so it bails on that color first, which is why the biggest difference is in the yellows.

As the codec goes from top to bottom in a frame, if it runs out of bandwidth, items low in the frame are sacrificed. I've seen lots of footage where mud, bad colors, macroblocking, etc. are more prevalent in the lower parts of frames.
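Both observations can be checked numerically if a stream analyzer can export per-macroblock QP values. The sketch below assumes such an export exists as a list of rows; the two small QP maps are invented purely for illustration and are not measurements from a GH2.

```python
from statistics import mean


def share_at_minimum(qp_rows, q_min):
    """Fraction of macroblocks coded exactly at the minimum QP."""
    flat = [qp for row in qp_rows for qp in row]
    return sum(1 for qp in flat if qp == q_min) / len(flat)


def mean_qp_per_row(qp_rows):
    """Average QP of each macroblock row, listed top to bottom."""
    return [mean(row) for row in qp_rows]


# Hypothetical 4x4 macroblock QP maps for one I-frame (made-up numbers).
low_bitrate = [
    [20, 20, 20, 20],
    [20, 20, 22, 22],
    [22, 24, 24, 26],
    [26, 28, 28, 30],
]
high_bitrate = [
    [20, 20, 20, 20],
    [20, 20, 20, 20],
    [20, 20, 20, 22],
    [20, 22, 22, 24],
]

if __name__ == "__main__":
    for name, qp_map in (("low", low_bitrate), ("high", high_bitrate)):
        print(name, share_at_minimum(qp_map, 20), mean_qp_per_row(qp_map))
```

A higher share at the minimum with the same minimum value would match the first explanation, and a row average that climbs toward the bottom of the frame would match the second.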

Chris -

@cbrandin - Interesting observation about the eyes' ability to resolve yellow. However, if the encoder is detecting yellow solely on the basis of hue, I'd anticipate a problem with dark shades of yellow, i.e. yellowish-brown. The eye can discriminate dark shades with much more clarity than it has for bright yellow.

-

Actually, that doesn't really make sense either. It's the test with the lower Q value that is the best. The problem is that it was only used in the top part of the very first frame. I'll have to repeat this test. Who knows, maybe my cat walked in while I was running the last test and changed the light reflected on the target slightly.

Chris -

@Vitaly_Kiselev OK, I will start the tests again. (PS: I was only leaving the Quantizer table at 1080p24 Setting Low and stepping through the Scaling tables one at a time in this test, i.e. leaving everything else alone. Do you prefer the GOP and bitrates to be put back to 'Suggested Values'?)

Can someone explain how the variable Quantizer scaling tables affect the Quantizer table settings in PTool? I'm doing some methodical quant testing on 24p now.

I take it the scalers, if employed, give a percentage reduction in bitrate compared with using the standard Quant table alone?

I've been reading this to try to understand things:

http://www.vcodex.com/files/H264_4x4_transform_whitepaper_Apr09.pdf

Update: first test, roughly a minute over the same scene, using Kae's 3 GOP on the 24P setting with:

(pic 1) Quantiser Table set to 1080p24 Setting Low, Q Scaling Table = Scaling T1, Auto ISO

(pic 2) Quantiser Table set to 1080p24 Setting Low, Q Scaling Table = Scaling T1, ISO 1600

(pic 3) Quantiser Table set to 1080p24 Setting Low, Q Scaling Table = Scaling T2, Auto ISO

(pic 4) Quantiser Table set to 1080p24 Setting Low, Q Scaling Table = Scaling T2, ISO 1600

Kae-Butt setD Qaunt Table 1080p24 Setting Low and Scaling T1 checked with Auto ISO.png (1297 x 685, 86K)

Kae-Butt setD Qaunt Table 1080p24 Setting Low and Scaling T1 checked with ISO1600.png (1304 x 688, 87K)

Kae-Butt setD Qaunt Table 1080p24 Setting Low and Scaling T2 checked with Auto ISO.png (1295 x 686, 105K)

Kae-Butt setD Qaunt Table 1080p24 Setting Low and Scaling T2 checked with ISO 1600.png (1295 x 682, 85K)

Q Table low and T2 settings.txt (538B)

-

@driftwood

First, do not change GOP or anything else other than one parameter.

Second, fix ISO.

Always attach your settings or list them completely, as I can't understand anything from your tests. -

@Vitaliy_Kiselev Is it OK to leave my GOP setting at 3 (as in Kae's settings)? All I'm going to do is leave the Quantiser table on 1080p24 Setting Low and then step through all the Quantisation Scaling tables one at a time. Or is this pointless?

-

@driftwood

I absolutely can't understand what you are doing.

You changed the GOP, varied the ISO, and changed a bunch of other settings.

These results are useless.

Most probably it is better for you to use http://www.personal-view.com/talks/discussion/335/pro-gh2-avchd-encoder-settings

-

@driftwood

Maybe, but it is of no use to me, as I can't understand this.

I repeat: do not touch anything, uncheck all video-related patches, and change one setting only. -

I don't know if it would help, but there are a number of charts from http://www.dsclabs.com/ that might be useful for this kind of testing if anyone has access to one.

-

Does anyone have more info on the GH2's interpretation of the Quantisation tables and scalers yet? E.g. in the PTool settings, do the high settings correspond to the 51 end of the range and the low settings to the bottom end at 1?

So, to remind ourselves: 1 gives the best quality and the worst compression, and 51 gives the best compression and the worst quality.

"...GOP Pattern 7 = GOP length 12 giving IBBP. Each video frame is divided into M macroblocks (MB) of 16 × 16 pixels (e.g., M = 99 for QCIF) for DCT based encoding. The DCT transformation is performed on a block (i.e., subdivision of a macroblock) of 8×8 pixels. We denote the quantization scale of the encoder by q. The possible values for q vary from q = 1, 2, . . . , 31 for MPEG4 and q = 1, 2, . . . , 51 for H.264/AVC..."

Some more info in http://mre.faculty.asu.edu/VDcurve_ext_Jan05.pdf -
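The macroblock count quoted above (M = 99 for QCIF) follows directly from dividing the frame into 16 × 16 blocks. A minimal sketch that applies the same arithmetic to the GH2's HD frame sizes, assuming the frame is padded up to a whole number of macroblocks (1080 lines are coded as 1088):

```python
import math


def macroblock_count(width: int, height: int, mb_size: int = 16) -> int:
    """Number of mb_size x mb_size macroblocks needed to cover a frame.

    Partial blocks at the right and bottom edges count as whole blocks,
    because the encoder pads the frame to a multiple of the block size.
    """
    cols = math.ceil(width / mb_size)
    rows = math.ceil(height / mb_size)
    return cols * rows


if __name__ == "__main__":
    for name, (w, h) in {
        "QCIF": (176, 144),
        "720p": (1280, 720),
        "1080p": (1920, 1080),
    }.items():
        print(name, macroblock_count(w, h))
```

Each of those macroblocks can carry its own quantizer value, which is why per-macroblock analysis is needed to see how the encoder spends its bits.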

A short thought:

The "Encoder Settings" look to me like scaling factors for quantisation tables.

720p and 1080i/p each seem to be addressed with 4 progression stages, controlling a kind of 4-step adaptive matrix.

The easier-to-encode progressive formats start with lower factors for temporally smooth content: 720p (2), 1080i/p (3). The lowest 1080 stage (3) can be shared by both i and p.

(A temporally smooth picture does not differ much between 1080i and 1080p.)

The more temporally rippled the content becomes, the more the quantisation factor has to rise, and it rises harder for i than for p.

Seeing the (17) for 1080i, it becomes obvious that 1080i is harder to encode than 1080p and would need more bits. Consequently, this has to be compensated by even higher quantisation factors to reach the same bitrate.

The last factor for 1080i is 17. Why 17?

I looked first for known H.264 restrictions and for correlations between the integers, and found: (17)*3=51.

Multiplied by 3, we get 51, which would correspond to the highest quantisation factor implemented in this encoder. And (2)*3 would mean a lowest quantisation factor of 6, maybe a comfortable value for temporally smooth 720p content.
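The arithmetic behind this guess is easy to check. The sketch below treats the multiplier of 3 purely as the hypothesis stated in this post, not as a confirmed property of the GH2 firmware, and the stage labels are descriptive names chosen here for illustration.

```python
H264_QP_MAX = 51  # upper end of the 8-bit H.264 QP range

# Hypothesis from this post only: encoder setting * 3 = quantisation factor.
ASSUMED_MULTIPLIER = 3

# Encoder setting values mentioned in the post (labels are illustrative).
encoder_settings = {
    "720p lowest stage": 2,
    "1080i/p lowest stage": 3,
    "1080i highest stage": 17,
}


def implied_qp(factor: int) -> int:
    """Quantisation factor implied by the assumed multiplier."""
    return factor * ASSUMED_MULTIPLIER


if __name__ == "__main__":
    for label, factor in encoder_settings.items():
        qp = implied_qp(factor)
        print(f"{label}: {factor} -> {qp} (within range: {qp <= H264_QP_MAX})")
```

The largest value landing exactly on 51 is what makes the multiplier plausible, but a single coincidence of this kind is weak evidence on its own.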

Furthermore it can be explained now why the encoder working at 720p and with lowest quantisation factors together with comfortably higher given bitrates sees no need to insert B-frames.

The encoder simply does not need to save any bits here and produces only P-frames (besides the occasional I-frame at GOP boundaries).

This can be useful to have higher quality and easier encoding/decoding.

On the other hand, forcing a 1080i encode to use a last factor of only 4 instead of 17 either violates the bitrate restriction or forces too many bits, so the encoder freezes or the SD card cannot keep up. This is not useful at all.

I would leave these controls alone at first, rather concentrate on the buffer controls.

While implementing x264, it became obvious how important a proper buffer implementation and adherence to the HRD model are for getting broader playback compliance. -

-

@driftwood

You do not understand what you are doing. :-)

I know that you want to help very much, so, I'll try to explain your error.

Right now all your settings are identical, absolutely.

You need to check 1080p24 Setting High and change it to each option (except the original) sequentially, testing each one. Check that you record in 1080p24 24H mode on the camera.

The camera must be fixed on a tripod, aimed at a well-lit, static scene. -
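The procedure described above is a one-factor-at-a-time test: every run keeps the baseline untouched except for a single setting. A minimal sketch of generating such a run list follows. The dictionary keys and the option names ("option A" and so on) are placeholders invented for illustration; the real choices are whatever the PTool dropdown offers.

```python
def one_at_a_time(baseline, parameter, options):
    """Build one settings dict per option, changing only `parameter`.

    The option equal to the baseline value is skipped, since that run
    would just repeat the unmodified configuration.
    """
    if parameter not in baseline:
        raise KeyError(parameter)
    runs = []
    for option in options:
        if option == baseline[parameter]:
            continue
        run = dict(baseline)  # copy so the baseline is never mutated
        run[parameter] = option
        runs.append(run)
    return runs


# Placeholder baseline: everything fixed, all other patches unchecked.
baseline = {
    "record_mode": "1080p24 24H",
    "iso": "fixed",
    "gop": "factory",
    "1080p24 Setting High": "original",
}
options = ["original", "option A", "option B", "option C"]
runs = one_at_a_time(baseline, "1080p24 Setting High", options)

if __name__ == "__main__":
    for number, run in enumerate(runs, start=1):
        print(number, run)
```

Saving each generated dictionary alongside the footage also covers the earlier request to always list the complete settings with every test.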

@Vitaliy_Kiselev

As in

Test 1080p24 Settings High> Scaling T1

Test 1080p24 Settings High> Scaling T2

Test 1080p24 Settings High> Scaling T3

etc...

Then..

Test 1080p24 Settings Low> Scaling T1 etc.. etc..

? -

@driftwood

Nope.

1080p24 Settings High > 1080p24 Settings Low etc.

You can't go wrong, as all you need to do is select an item from the dropdown list. -

@VK OK, I think I get what you need. Incidentally, if you could announce which parts need testing or verifying and organise the testers more, we could cover much more ground, and more quickly, even if it means only you and a few others know what the results mean.

PS Sorry for the misinterpretation but I want to help you get the best out of the GH2 :-)

So my first test would be this?

checkbox 1st test.png (380 x 624, 32K)
